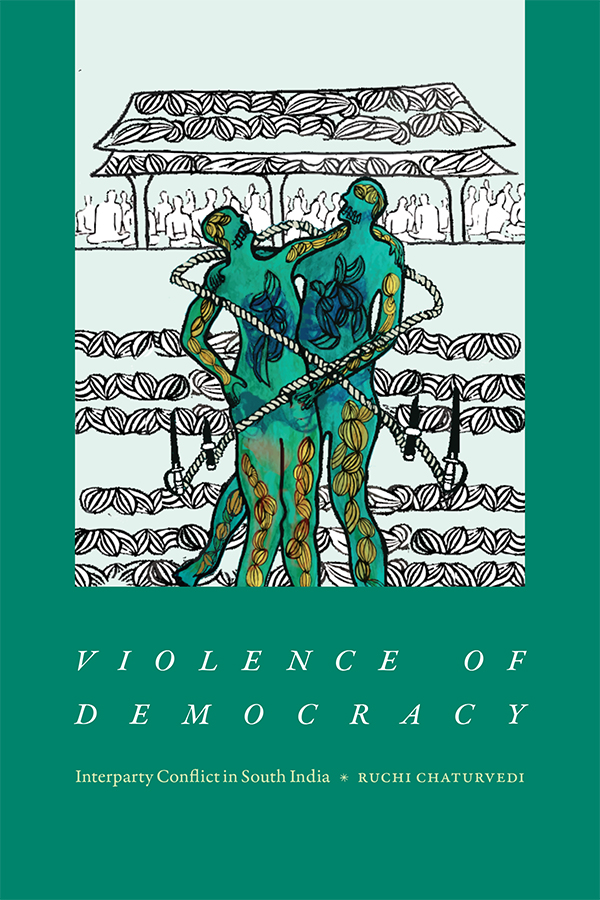

A review of Violence of Democracy: Interparty Conflict in South India, Ruchi Chaturvedi, Duke University Press, 2023.

Violence of Democracy studies a long-standing violent antagonism between members of the party left and the Hindu right in the Kannur district of Kerala, a state on the southwestern coast of India. The term party left refers to members of the Communist Party of India (Marxist) (CPI(M)); the term Hindu right denotes affiliates of the Rashtriya Swayamsevak Sangh (RSS) and the Bharatiya Janata Party (BJP), which has held power in New Delhi since 2014. The prevalence of violence in Kerala’s political life presents the reader with three paradoxes. First, political scientists view democracy as a pacifying system, as the regime that is most capable of keeping violence at bay. Autocracies are violent by nature; democracies are supposed to be more peaceful, both between themselves (democracies don’t go to war against each other) and within their borders (antagonisms are resolved through the ballot box). But Ruchi Chaturvedi shows us that democracy can coexist with violence; indeed, that some characteristics of a democratic regime call for the violence it is supposed to contain. As she states in the introduction, “violence, I argue, not only reflects the paradoxes of democratic life, but democratic competitive politics has also helped to condition and produce it.” This criminalization of domestic politics has a long history in Kerala, and Violence of Democracy documents it by revisiting the life narratives of key politicians from the left, by going through judicial cases and media reports of political violence in the Kannur district, and by conducting ethnographic interviews with grassroots militants from both parties. This book will be of special interest to social scientists interested in Indian politics as viewed from a southern state that now stands in opposition to the Modi government. But the author also raises disturbing questions for political scientists more generally: is democracy intrinsically violent? What explains the shift from the verbal violence inherent in agonistic politics to antagonistic confrontation that results in acts of intimidation, attempted murder, and hate crimes? How can violence become closely entwined with the institutions of democracy? How can political forces be made accountable for the violence they encourage and the crimes committed in their name? What happens to political violence and its culprits when they are prosecuted through the judicial system and sanctioned under criminal law?


Violent democracy

The second paradox lies in the root causes of political violence in this district of Kerala. Violence in India is often seen as the result of communal tensions. India’s birth of freedom was bathed in blood: the 1947 partition immediately following independence cut through the fabric of social life, pitting one community against the other. Antagonisms between Hindus and Muslims, or between Hindus and Sikhs, have often led to waves of riots and murderous violence. Beyond the trauma of the partition, in which around one million people were killed and 14 million were displaced, mass outbreaks of violence include the 1969 Gujarat riots involving internecine strife between Hindus and Muslims, the 1984 Sikh massacre following the assassination of Indira Gandhi by her Sikh bodyguards, the armed insurgency in Kashmir starting in 1989, the Babri Masjid demolition in the city of Ayodhya leading to retaliatory violence in 1992, the 2002 Gujarat riots that followed the Godhra train burning incident, and many other such episodes. As if religion were not reason enough to fuel internal conflict, Indian society is also divided along caste, class, race, regional, and ethno-linguistic lines, and these divisions in turn often abet violence and intercommunal strife. But in the Kannur district that Chaturvedi observes, “members of the two groups do not belong to ethnic, racial, linguistic, or religious groups that have been historically pitched against other.” Indeed, “local-level workers of both the party left and the Hindu right involved in the violent conflict with each other share a similar class, religious, and caste background. And yet the contest between them to become a stronger presence and the major political force in the region has generated considerable violence.” The conflict between the two parties in this particular district is purely political. It cannot be read as a conflict between an ethnic or religious majority and a minority community. Its roots lie elsewhere: for Chaturvedi, they are to be found in the very functioning of parliamentary democracy in India.

The third paradox is that this history of violent struggle between the party left and the Hindu right doesn’t correspond to the standard image most people have of Kerala. This state on India’s tropical Malabar Coast is known for its high literacy rate, low infant and adult mortality, and low levels of poverty. Kerala’s model of development gained exceptional global coverage in the 1970s, 1980s, and early 1990s, before the rest of India embarked on its course of high growth and rising average incomes. Even now, Kerala is ahead of other Indian states in terms of provision of social services such as education and health. Its achievements are not linked to a particular industry, like the IT service sector in Bangalore or the automotive industry in Chennai, but stem from continuous investments in human capital and infrastructure (remittances of Kerala workers employed in Gulf states have also played a role). Kerala is also known for having had self-avowed Marxists in positions of power for more than four decades. As Chaturvedi reminds us, “it was the first place in the world to elect a communist government through the electoral ballot in 1957.” Today, the two largest communist parties in Kerala politics are the Communist Party of India (Marxist) and the Communist Party of India, which, together with other left-wing parties, form the ruling Left Democratic Front alliance. They have been in and out of power for most of India’s post-independence history, and are well entrenched in local political life. Communists are sometimes accused of plotting the violent overthrow of the government through revolutionary tactics, and the BJP is not above playing on the red scare and accusing its enemies of conspiracy. But in Kerala violence doesn’t come from revolutionary struggle or armed insurgency; it originates in the very exercise of power. And this did not prevent Kerala from becoming the poster child of development economics, showing that redistributive justice can be achieved despite (or alongside) violent conflict and antagonistic politics.

Malabar traditions

Some observers may explain political violence in Kerala by the intrinsic character of its inhabitants. They point to a traditional martial culture of physical confrontation and warfare. The local martial art, kalaripayattu, is said to be one of the oldest combat techniques still in existence. Dravidian history was marked by internecine warfare, the rise and fall of many great empires, and a culture of resistance against northern invaders. The Portuguese established several trading posts along the Malabar Coast and were followed by the Dutch in the 17th century and the French in the 18th century. In French, a “malabar” still means a muscular and sturdy character, although the name seems to come from the indentured Indian workers who came to toil in the sugarcane fields of Réunion Island. The British gained control of the region in the late 18th century. The Malabar District was attached to the Madras Presidency, while the other two provinces of Travancore and Cochin, which make up the rest of present-day Kerala, were ruled indirectly through a series of treaties reached with their princely authorities in the course of the 19th century. Direct rule in Malabar reinforced landlord domination over sharecroppers and tenants, with the landlords belonging to the upper-caste Nairs and Nambudiris while tenant cultivators and agricultural workers were the purportedly inferior Thiyyas, Pulayas, and Cherumas. In the early 20th century, social tensions were rife, voices were calling for land reform and the end of caste privilege, and Kerala became the breeding ground for the cadres and leaders of the Communist Party of India (CPI), officially founded on 26 December 1925. Communism is therefore heir to a long tradition of militancy in Kerala. India is home to not one but two communist parties, the CPI and the CPI(M), the second born of a schism in 1964 and sending more representatives to the national parliament than the first.

Instead of essentializing a streak of violence in India’s and Kerala’s political life, Chaturvedi explains the violent turn of electoral politics in the district of Kannur as the result of majoritarianism, the adversarial search to become a major force in a local political system, and its correlate minoritization, the drive to marginalize proponents of the minority party. The search for ascendance is not extraneous to democracies but is part of their basic definition and structure. In Kerala, politics turned violent precisely because the main political forces, and especially the party left and the Hindu right, agreed to play by the rules of democracy. The acceptance of democracy’s rules of the game, namely free and fair elections and majority rule, wasn’t a preordained result. At various points in its history, the communist movement in India was tempted by insurgency tactics and armed struggle. Chaturvedi revisits the political history of Kerala by drawing the portrait of two leaders of the political left, using their autobiographies and self-narratives. Both A.K. Gopalan (“AKG”) and P.R. Kurup were upper-caste politicians who identified with the plight of poor peasants and lower-caste workers. In 1927, Gopalan joined the Indian National Congress and began playing an active role in the Khadi Movement and the upliftment of Harijans (“untouchables” or Dalits). He later became acquainted with communism and was one of 16 CPI members elected to the first Lok Sabha in 1952. Gopalan’s life narratives “privilege spontaneous moral reactions marked by a good deal of physical courage and a strong sense of masculinity.” He was a party organizer, anchoring the CPI and then the CPI(M) in the political life of Kerala, and a partisan of electoral politics, discarding the temptation to engage in armed insurrection in 1948-1951 as “adventurist” or “ultra-left.” Thanks to his legacy, the CPI(M) now resembles other parties normally seen in parliamentary democracies: “each one seeking to obtain the majority of votes in order to ascend to the major rungs of government.” But P.R. Kurup embodies a darker side of electoral politics: known as “rowdy Kurup,” he remained a regional socialist leader through strong-arm tactics and the occasional street fight against rival supporters of the CPI or the Congress. His band of low-caste supporters (“Kurup’s rowdies”) was willing to use intimidation and violence so that their party remained on top.

From agonistic contest to antagonistic conflict

Both Gopalan and Kurup were “shepherds” or “pastoral leaders” who protected, saved, and facilitated the well-being of a populace that reciprocated their favors with votes and other expressions of support. By contrast, the next generation of local leaders to which Chaturvedi turns comes from a lower rung of society. They are the militant members and local cadres of the CPI(M) and the RSS-BJP who form antagonistic communities willing to attack and counterattack each other so that their party might dominate in the electoral competition. The fact that the young men at the forefront of the conflict between the party left and the Hindu right in the district of Kannur share similar religious, caste, and class backgrounds makes this conflict exceptional. It cannot be read as a conflict between an ethnic or religious majority and a minority community. But this distinctive form of political violence in Kannur can be characterized as an exceptional-normal phenomenon, an expression of something common in all democracies: competition for popular and electoral support creates the conditions and ground for the emergence of hate-filled and vengeful acts of violence between opposing political communities. The clashes between the two camps are not just occasional: drawing on sources such as police and court records as well as personal interviews with workers from the two groups, the author estimates that more than four thousand workers of various parties have been tried for political crimes in Kannur in the past five decades. Assailants used weapons such as iron rods, chopping knives, axes, crude bombs, sword knives (kathival), sticks, and bamboo staffs (lathi). They formed tight-knit communities of young men sharing fraternal bonds and a spirit of strong cohesion: the RSS shakha (local branch network) is the most organized structure from the Hindu right, but the party left also has its volunteer vigilante corps akin to RSS cadres or a student wing trained in “self-defense techniques.” For both camps, a cycle of attacks and counterattacks breeds mimetic violence and a culture of aggression and vengeance.

In a functional democracy, law and order is maintained and crime gets punished. Many young men from the party left and the Hindu right have been brought to court on suspicion of politically motivated crimes and sanctioned accordingly. But for Chaturvedi, law is a “subterfuge” that obfuscates the complicity of the democratic political system in brewing violence and offers it an “alibi” or a “free pass.” Justice is the continuation of politics by other means, and the conflict between the CPI(M) and the RSS-BJP in Kannur is being reenacted in the courts. The judicial system depoliticizes political violence by projecting responsibility onto individuals and exonerating political structures of any responsibility for the crimes committed in their name. Perpetrators of violent aggression are liable under criminal law and judges don’t take into account their political motivations, pointing instead to acts of madness or a background of criminal delinquency. Political parties from both sides do not remain inactive during trials: they tutor witnesses to produce convincing testimonies or offer alibis, they create suspicion about testimonies of the opposite party, they fabricate evidence and manipulate opinion. Judicial proceedings take an exceedingly long time due to juridical maneuvers, and suspects are often acquitted for lack of evidence. Important local figures thought to be planning and facilitating the aggressions are not called to account. In addition, according to Chaturvedi, the judicial system in India has taken a majoritarian turn: it affords impunity to members of the dominant group while persecuting minorities and those who challenge its hegemony. In Kerala, it did not stop generations of young men from engaging in attacks and counterattacks so that their party could stay on top. Depoliticizing political violence and obscuring the conditions that have produced it not only leaves political forces unaccountable: it perpetuates a cycle of aggression and impunity. For the author, true political justice should not reduce political violence to individual criminality, but should address the structures that underlie it.

Majoritarianism and minoritization

For Chaturvedi, electoral democracy is defined by the competition “to become major and make minor,” or the imperative “to become a major political force and reduce the opposition to a minor position.” In a first-past-the-post electoral system, the party that commands the greatest number of votes in the greatest number of constituencies obtains greater legislative powers and access to executive authority. There is a built-in incentive to conquer and vanquish, as political opponents are seen as an obstacle on the road to power. Democracy therefore has a propensity to divide, polarize, hurt, and generate long-term conflicts. In the district studied by the author, democracy has facilitated the emergence of violent majoritarianism and minoritization, understood as “practices that disempower a group in the course of establishing the hegemony of another.” Most modern democracies make accommodations to protect minorities, but they also continue to uphold the rule of the majority as the source of their legitimacy. The founding fathers of modern India, from Syed Ahmad Khan to Mahatma Gandhi to B.R. Ambedkar, were aware of this risk of majority rule and sought to mitigate it by building checks and balances and appealing to the better part of people’s nature. Initially a proponent of Hindu-Muslim unity, Sir Syed wrote about the “potentially oppressive” character of democracy, fearing that it might translate into “crude enforcement of majority rule.” Gandhi not only warned against the workings of competitive politics and the dangers of majoritarianism, but also expressed skepticism about the rule of law and the impartiality of the judicial system. Ambedkar wrote principles of political freedom and social justice into the Indian constitution, but was keenly aware that democracies were by definition a precarious place for social and numerical minorities. Although their solutions may not be ours, Chaturvedi concludes that “we need to attend to questions that figures like Sir Syed, Ambedkar, and Gandhi raised.”

We are tirelessly reminded that India is “the world’s largest democracy.” In times of general elections, like the one taking place from 19 April to 1 June 2024, approximately 970 million people out of a population of 1.4 billion are called to the ballot box in several phases to elect 543 members of the Lok Sabha, the lower house of India’s bicameral parliament. The election garners a lot of international attention. For some, it is the promise that democracy can flourish regardless of economic status or levels of income per head: India was one of the poorest countries in the world for much of the twentieth century, and yet has never reneged on its democratic pledge since independence in 1947. For others, it is the proof that unity in diversity is possible, and that nations divided along ethnic, religious, or regional lines can manage their differences in a peaceful and inclusive way. For still others, India is not immune to the populist currents menacing democracies in the twenty-first century. For some observers, like political scientist Christophe Jaffrelot, India’s elections this year stand out for their undemocratic nature, and democracy is under threat in Narendra Modi’s India. And yet India is a functional democracy where citizens participate in voting at far higher rates than in the United States or Europe. Lisa Mitchell’s book Hailing the State draws our attention to what happens to (as the book’s subtitle says) “Indian democracy between elections.” Except during general election campaigns, foreign media’s coverage of Indian domestic politics is limited in scope and mostly concentrates on the ruling party’s exercise of power in New Delhi. Whether this year’s elections are free and fair will be considered a test for Indian democracy. But as human rights activist G. Haragopal (quoted by the author) reminds us, “democracy doesn’t just means elections. Elections are only one part of democracy.” Elected officials have to be held accountable for their campaign promises; they have to listen to the grievances of their constituencies and find solutions to their local problems; they have to represent them and echo their concerns. When they don’t, people speak out.


The relations between science and fiction have nowhere been any closer than on the planet Mars. The genre of science fiction literally began with imagining life on Mars; and some of its most popular entries nowadays are stories of how humans could settle on the red planet and make it more like the Earth. Planetary science originally took Mars as its object and tried to project onto Mars what scientists knew about the climate and geology on Earth. Now this interest for Martian affairs is coming back to Earth, as scientists are applying knowledge derived from studying Mars to the study of the Earth’s planetary dynamics. Mars’ image as a dying planet has been invoked to support competing, even antithetical views, of the fate of our world and its inhabitants: a glorious future of interplanetary expansion and space conquest, or a bleak fate of environmental devastation and human extinction. Science has not completely closed the issue on whether life has ever existed on Mars; but visions of extraterrestrial civilizations and space invaders have been superseded by narratives centered on mankind and its cosmic manifest destiny. This intimate relationship between science and fiction under the sign of Mars is now more than one century old, but shows no sign of abating. What is it in Mars that inflames people’s imagination from one generation to the next? Why has Mars attracted more interest than our closest satellite, the Moon, or than more distant planets in the solar system such as Venus or Saturn? Are there commonalities between the way our ancestors envisioned channels built by Martian civilizations and more recent visions of making Mars suitable for human sojourn? Will the detailed inventory of the Martian terrain brought back by satellite images and camera-equipped rovers put an end to our interest for the red planet, or will it rekindle a new space age with the colonization of Mars as its overarching goal? 
And how can our visions of planetary expansion avoid the pitfalls of colonial metaphors and Earth-based anthropocentrism?

The relations between science and fiction have nowhere been any closer than on the planet Mars. The genre of science fiction literally began with imagining life on Mars; and some of its most popular entries nowadays are stories of how humans could settle on the red planet and make it more like the Earth. Planetary science originally took Mars as its object and tried to project onto Mars what scientists knew about the climate and geology on Earth. Now this interest for Martian affairs is coming back to Earth, as scientists are applying knowledge derived from studying Mars to the study of the Earth’s planetary dynamics. Mars’ image as a dying planet has been invoked to support competing, even antithetical views, of the fate of our world and its inhabitants: a glorious future of interplanetary expansion and space conquest, or a bleak fate of environmental devastation and human extinction. Science has not completely closed the issue on whether life has ever existed on Mars; but visions of extraterrestrial civilizations and space invaders have been superseded by narratives centered on mankind and its cosmic manifest destiny. This intimate relationship between science and fiction under the sign of Mars is now more than one century old, but shows no sign of abating. What is it in Mars that inflames people’s imagination from one generation to the next? Why has Mars attracted more interest than our closest satellite, the Moon, or than more distant planets in the solar system such as Venus or Saturn? Are there commonalities between the way our ancestors envisioned channels built by Martian civilizations and more recent visions of making Mars suitable for human sojourn? Will the detailed inventory of the Martian terrain brought back by satellite images and camera-equipped rovers put an end to our interest for the red planet, or will it rekindle a new space age with the colonization of Mars as its overarching goal? 
And how can our visions of planetary expansion avoid the pitfalls of colonial metaphors and Earth-based anthropocentrism? On July 9, 2011, South Sudan celebrated its independence as the world’s newest nation. One name considered for christening the country was the Kush Republic, after the Kingdom of Kush that ruled over part of Egypt until the 7th century BC. According to historians of antiquity, Kush was an African superpower and its influence extended to what is now called the Middle East. Placing the new nation under the sign of this prestigious ancestor was seen as particularly auspicious. But for many people the name Kush has been connected with the biblical character Cush, son of Ham and grandson of Noah in the Hebrew Bible, whose descendants include his son Nemrod and various biblical figures, including a wife of Moses referred to as “a Cushite woman.” A prophecy about Cush in Isaiah 18 speaks of “a people tall and smooth-skinned, a people feared far and wide, an aggressive nation of strange speech, whose land is divided by rivers” that will come to present gifts to God on Mount Zion after carrying them in papyrus boats over the water. For many South Sudanese at independence, Isaiah’s ancient prophecy directly applied to them, to the point the newly appointed President Salva Kiir chose Israel as one of his first destinations abroad. Churchgoers also read echoes of their fight for sovereignty and independence in various passages of the Bible. Christian southerners envisioned themselves as a chosen people destined for liberation, while Arabs and Muslim rulers in Khartoum were likened to oppressors in the biblical tradition of Babylon, Egypt, and the Philistines. John Garang, leader of the Sudan People’s Liberation Army/Movement (SPLA/M), was identified as a new Moses leading his people to the promised land. 
The fact that he left the reins of power to his second-in-command Salva Kiir before independence, just like Moses did with Joshua upon entering the land of Canaan, was interpreted as further accomplishment of the prophecy. Certainly God had a divine plan for the South Sudanese. For some Christian fundamentalists, the accomplishment of Isaiah’s prophecy was a sign of the imminent Second Coming of Jesus Christ that Isaiah identified as the Messiah, the king in the line of David who would establish an eternal reign upon the earth.

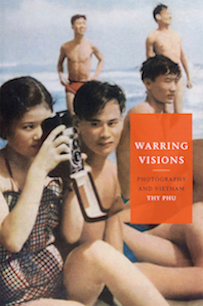

On July 9, 2011, South Sudan celebrated its independence as the world’s newest nation. One name considered for christening the country was the Kush Republic, after the Kingdom of Kush that ruled over part of Egypt until the 7th century BC. According to historians of antiquity, Kush was an African superpower and its influence extended to what is now called the Middle East. Placing the new nation under the sign of this prestigious ancestor was seen as particularly auspicious. But for many people the name Kush has been connected with the biblical character Cush, son of Ham and grandson of Noah in the Hebrew Bible, whose descendants include his son Nemrod and various biblical figures, including a wife of Moses referred to as “a Cushite woman.” A prophecy about Cush in Isaiah 18 speaks of “a people tall and smooth-skinned, a people feared far and wide, an aggressive nation of strange speech, whose land is divided by rivers” that will come to present gifts to God on Mount Zion after carrying them in papyrus boats over the water. For many South Sudanese at independence, Isaiah’s ancient prophecy directly applied to them, to the point the newly appointed President Salva Kiir chose Israel as one of his first destinations abroad. Churchgoers also read echoes of their fight for sovereignty and independence in various passages of the Bible. Christian southerners envisioned themselves as a chosen people destined for liberation, while Arabs and Muslim rulers in Khartoum were likened to oppressors in the biblical tradition of Babylon, Egypt, and the Philistines. John Garang, leader of the Sudan People’s Liberation Army/Movement (SPLA/M), was identified as a new Moses leading his people to the promised land. The fact that he left the reins of power to his second-in-command Salva Kiir before independence, just like Moses did with Joshua upon entering the land of Canaan, was interpreted as further accomplishment of the prophecy. 
Certainly God had a divine plan for the South Sudanese. For some Christian fundamentalists, the accomplishment of Isaiah’s prophecy was a sign of the imminent Second Coming of Jesus Christ that Isaiah identified as the Messiah, the king in the line of David who would establish an eternal reign upon the earth.

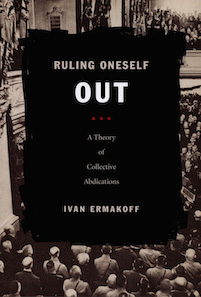

In April 2015, the Institut Français in Hanoi held a photography exhibition, Reporters de Guerre (War Reporters), marking the fortieth anniversary of the end of the Vietnam War. Curated by Patrick Chauvel, an award-winning photographer who had covered the war for France, the exhibition showcased the work of four North Vietnamese photographers (Đoàn Công Tính, Chu Chi Thành, Tràn Mai Nam, and Hùa Kiêm) whose documentation of the Vietnam War was often overshadowed by that of photographers from the Western press working from the South. The poster for the cultural event at L’Espace used an iconic image: a black-and-white picture of North Vietnamese soldiers climbing a rope against the spectacular backdrop of a waterfall, taken in 1970 along the Ho Chi Minh trail. Đoàn Công Tính, the photographer, had caught a moment of timeless beauty and strength, an image of mankind overcoming physical hindrances and material obstacles in the pursuit of a higher goal. However, a scandal erupted when Danish photographer Jørn Stjerneklar pointed out on his blog that this iconic image had been doctored. He compared two versions: the recent print that appeared in the exhibition and the “original,” which was published in Tính’s 2001 book Khoảnh Khắc (Moments). Tính apologized profusely for “mistakenly” sending the photoshopped image, claiming that the original negative had been damaged and that he had accidentally included a copy of the image with a photoshopped background on a CD sent to the exhibition’s organisers. But in a follow-up article on his blog, Stjerneklar pointed out that even the “original” had been retouched, as evidenced by the repeating pattern of the waterfall, and was likely a montage based on another photograph, which is displayed at the War Remnants Museum in Ho Chi Minh City. Stjerneklar’s story was picked up worldwide and ignited a lively debate around the presumed objectivity of photojournalism and the role of photography in propaganda.

How can a majority of parliamentarians vote to renounce democracy? Why would a group accept its own debasement and, in doing so, abdicate its capacity for self-preservation? What induces them not only to surrender power, but also to legitimize this surrender by a vote? Which conjuncture allowed democratically elected officials to rule themselves out and let authoritarian leaders take full control? This sad reversal of fortune happened on two occasions in the twentieth century. On 23 March 1933, less than one month after the burning of the Reichstag, German parliamentarians gathered at the Kroll Opera House in Berlin passed a bill enabling Hitler to concentrate all powers in his own hands by a majority of 444 to 94, meeting the two-thirds majority required for any constitutional change. On the afternoon of 10 July 1940, at the Grand Casino in Vichy, a great majority of French deputies and senators (569 parliamentarians, about 85 percent of those who took part in the vote) endorsed a bill that vested Marshal Pétain with full powers, including authorization to draft a new constitution. In these two cases, abdication was sanctioned by an explicit decision: a vote. Both votes gave authoritarian leaders all the powers they needed to sideline parliament, suspend the republican constitution, and rule by decree. Both 23 March 1933 and 10 July 1940 are dates that live on in infamy in Germany and in France. From the moment the events occurred, they haunted the elected officials who took part in the decision. To borrow Ivan Ermakoff’s words, these were “decisions that people make in a mist of darkness, the darkness of their own motivations, the darkness of those who confront and challenge them, and the darkness of what the future has in store.” Can we shed light on this darkness?

The “flash of capital” refers to the way the underlying structure of a national economy “flashes” or reverberates through the films it produces, and to how film criticism can highlight the relations between culture and capitalism, film aesthetics and geopolitics, movie commentary and political discourse, at particular moments of their transformation. A flash is not a reflection or an image, and Eric Cazdyn does not subscribe to the reflection theory of classical Marxism that sees cultural productions as a mirror image of the underlying economic infrastructure. Karl Marx posited that the superstructure, which includes the state apparatus, forms of social consciousness, and dominant ideologies, is determined “in the last instance” by the “base” or substructure, which relates to the mode of production that evolves from feudalism to capitalism and then to communism. Transformations of the mode of production lead to changes in the superstructure. Hungarian philosopher and literary critic György Lukács applied this framework to all kinds of cultural productions, claiming that a true work of art must reflect the underlying patterns of economic contradictions in society. Rather than Marx’s and Lukács’ reflection theory, Cazdyn’s “flash theory” is inspired by post-Marxist cultural theorists Walter Benjamin and Fredric Jameson, and by the work of Japan scholars Masao Miyoshi and Harry Harootunian (the two editors of the collection at Duke University Press in which the book was published). For Cazdyn, how we produce meaning and how we produce wealth are closely interrelated. Cultural productions such as films give access to the unconscious of a society: “What is unrepresentable in everyday discourse is flashed on the level of the aesthetic.” Films not only reflect and explain underlying contradictions but, more importantly, actively participate in the construction of economic and geopolitical transformations.

Many public events in the United States and in Canada begin by paying respects to the traditional custodians of the land, acknowledging that the gathering takes place on their traditional territory, and noting that they called the land home before the arrival of settlers and in many cases still do call it home. Cooling the Tropics does not open with such a Land Acknowledgement, but Hi’ilei Julia Kawehipuaakahaopulani Hobart (hereafter: Hi’ilei Hobart) claims Hawai’i as her piko (umbilicus) and pays tribute to the kūpuna (noble elders) and the lāhui (lay people) who “defended the sovereignty of [her] homeland with tender and fierce love.” She describes her identity as “anchored in a childhood in Hawai’i, with a Kānaka Maoli mother who epitomized Hawaiian grace and a second-generation Irish father who expressed his devotion to her by researching and writing our family histories.” She expresses her support for decolonial struggles and Indigenous rights, and has participated in protests claiming territorial sovereignty for Hawai’i’s Native population. How can one decolonize Hawai’i? How can Hawaiian sovereignty discourse articulate a claim to land restitution and self-determination that is not a return to a mythic past? What about racial mixing, once regarded with anxiety and now touted as a symbol of Hawai’i’s success as a multicultural US state? What happens to settler colonialism and white privilege when the local economy and the political arena are dominated by populations originating from East Asia and persons of mixed descent? Is economic self-reliance a feasible option considering the imbrication of Hawai’i’s economy into the US mainland’s market? Can the rights of the Indigenous population be better defended in a sovereign Hawai’i? What is the meaning of supporting decolonial futures that include “deoccupation, demilitarization, and the dismantling of the settler state”?
Can decolonization be achieved by nonviolent means, or do sovereignty activists have to resort to rebellion and armed struggle? What would be the future of a decolonized Hawai’i in a region fraught with military tensions and geopolitical rivalries? What can a decolonial perspective bring to the analysis of Hawai’i’s colonial past and possible futures? And why is academic research on Hawai’i’s history and society so often aligned with the decolonization agenda, to the point that decolonial approaches are almost synonymous with Hawaiian studies in the United States? More to the point: how can a PhD student majoring in food studies and chronicling the introduction of ice water, ice-making machines, ice cream, and shave ice in Hawai’i address issues of settler colonialism, Indigenous dispossession, Native rights to self-determination, and decolonial futures?
