A review of From Russia with Code: Programming Migrations in Post-Soviet Times, Mario Biagioli and Vincent Antonin Lépinay eds., Duke University Press, 2019.

From Russia with Code is the product of a three-year research effort by an international team of scholars connected to the European University at Saint Petersburg (EUSP). It benefited from the patronage of two important figures: Bruno Latour, who pioneered science and technology studies (STS) in France and oversaw the creation of a Medialab at Sciences Po in Paris; and Oleg Kharkhordin, a Russian political scientist with a PhD from the University of California at Berkeley who served as EUSP’s rector for most of the study. Based on more than three hundred in-depth interviews conducted from 2013 through 2015, the research project also benefited from a rare window of opportunity offered by the political conditions prevailing at the time. Supported by a consortium of Western research institutions, it was partially funded by a grant from the Ministry of Education and Science of the Russian Federation for the study of high-skill brain migration. It could build on the solid foundation of EUSP, a private graduate institute whose academic independence is secured by an endowment fund that is one of the biggest in the country. The brain drain of IT specialists was obviously a matter of concern for Russian authorities, as surveys showed that in 2014 the emigration of Russian scientists and entrepreneurs was by a wide margin the highest since 1999. The movement was amplified after 2014 by Russia’s decision to annex the Crimean Peninsula and, in 2022, by its all-out war of aggression against Ukraine. Conditions for fieldwork-based studies and international research projects in Russia would certainly be different today. The book’s chapter on civic hackers illustrates how fast the ground has moved in the past ten years: most of the civic tech projects it describes were affiliated with the foundation created by Alexey Navalny, a Russian opposition leader who was detained in 2021 and died in a high-security prison in February 2024.


Preventing the brain drain

The research questions framing the project demonstrate how social science can contribute to policy discussions while translating practical issues into scholarly interrogations. The concerns of the Russian authorities that sponsored the project are well reflected in the topics covered and the questions addressed. How can Russia prevent or reverse the brain drain that was perceived as a direct threat to the nation’s sovereignty? How can it avoid dependence on Western imports and cultivate world leaders in an industry dominated by the GAFA? Is import substitution in the IT sector a viable strategy, or should the country rely on foreign direct investment and integration into global value chains? Could Russia create its own version of Silicon Valley by encouraging the clustering of industries in special economic zones and technoparks? These questions are reframed and displaced through the lenses of the disciplines mobilized by the members of the research team: STS, transition to market theory, economic geography, innovation policy studies, corporate management, migration studies, and so on. But mostly, From Russia with Code helps answer the questions that readers familiar with IT know all too well: why are Russian programmers so talented and prized by the market? What explains their unique combination of skills, and how can these skills be integrated into a foreign business setting? Is it true that their technical prowess is offset by a lack of managerial skills and poor entrepreneurial spirit? The list of famous Russian IT developers includes Andrei Chernov, one of the founders of the Russian Internet and the creator of the KOI8-R character encoding; Andrey Ershov, whose research on the mathematical nature of compilation was recognized with the prestigious Krylov Prize; Mikhail Donskoy, a leading developer of Kaissa, the first computer chess champion; Alexey Pajitnov, inventor of Tetris; and Yevgeny Kaspersky, founder of cybersecurity and anti-virus provider Kaspersky Lab. Russia is one of the few countries that is not dominated by Google, Facebook, and WhatsApp, but that has developed its own search engine (Yandex), social network (VKontakte), and messaging app (Telegram). A last question lurks in readers’ minds: what are Russian hackers really up to, and should we be afraid of their cyberattack capabilities?

The standard diagnosis of Russia’s IT capacity is framed by transition theory and posits that “Russians historically have been good at invention but poor at innovation.” Russian computer scientists built successful academic careers outside their homeland, and many global technological giants such as Apple, Google, Intel, Microsoft, or Amazon retain Russian programmers as valuable talents. Yet Russian IT entrepreneurs are scarce both in Russia and abroad, and outstanding success stories are the exception rather than the rule. It took one generation to produce a Sergey Brin, co-founder of Google, who arrived in the United States at the age of six and whose Russian Jewish parents, typically, pursued teaching and research careers instead of turning to the corporate world. The virtuosity of Russian software programmers is often explained by their high-level training in mathematics and pure science. The Soviet Union maintained a top-class scientific apparatus, from the fizmat model high schools specializing in math and physics to the dense network of research institutes, science cities, and elite academic institutions like the Academy of Sciences. This strong institutional basis translated into a high number of Nobel prizes and science olympiad laureates. Russian IT developers are praised for their deep interest and immersion in research, an inventive turn of mind, the ability to think independently and offer innovative solutions, and their intuitive grasp of complex problems. But they are also lambasted for their lack of management and entrepreneurial skills. Management was something to which Soviet scientists and science students had virtually no exposure. Even now, business culture is still perceived by many in the community as a superfluous and even disingenuous element. According to the standard view, Russian tech specialists are often interested mainly in new and technically exciting projects, to the point where they disregard the interests of their clients. They tend to think that if an idea is good technically, it will automatically translate into commercial success. They are criticized for a lack of business acumen, poor business etiquette, a certain intolerance for risk, a limited sense of the global market, and a lack of interest in management issues, which they see as “bullshit.”

Lack of management skills

The studies assembled in From Russia with Code both validate and complicate this diagnosis. Russian IT specialists are certainly heirs to a tradition that values the plan over the market, pure science over applied technology, and developing elegant responses to abstract questions over providing practical solutions to specific problems. Technical skills can be acquired using brute force and a sound foundation in basic science; management culture takes much longer to cultivate and relies more on “soft skills.” The history of computer science in the Soviet Union lies at the root of the differences in programming cultures between East and West. As long as informatics remained a basic science akin to applied mathematics, Soviet scientists remained at the forefront of the discipline. Although cybernetics was initially perceived as an American “reactionary pseudoscience,” it quickly became part of a vision of a socialist information society. As in the United States, early computers were intended for scientific and military calculations. A universally programmable electronic computer known as MESM was created in 1950 by a team of scientists directed by Sergey Lebedev at the Kiev Institute of Electrotechnology. Electrical engineering and programming were among the few careers in the Soviet Union that were relatively open to Jews and to women: hence their large numbers in these professions. The engineering education was fairly broad, with heavy emphasis on mathematics and physics, but without much foundation in computers: according to one former student, “learning to program without computers was akin to learning to swim without water.” Hardware limitations forced Soviet programmers to write programs in machine code until the early 1970s. By that time, the Soviet government had decided to abandon development of original computer designs and encouraged cloning of existing Western systems. A program to expand computer literacy in Soviet schools was one of the first initiatives announced by Mikhail Gorbachev after he came to power in 1985. A network of after-school education centers offering programming classes for children made BASIC and other programming languages widely popular.

A half century’s worth of Soviet experience with computing did not just disappear overnight with the end of the Soviet Union. Russians continued to play by the old rules they had internalized in the Soviet economy. The technical skills that Russian software programmers are internationally appreciated for and identified with are skills they have developed through the very specific Russian (and formerly Soviet) educational system. A case study of Yandex, the company behind Russia’s main search engine and the fourth-largest in the world, illustrates how coding socializes IT workers and creates communities of practice aligned with corporate objectives. Computer code is written in languages that need to be executed by machines, thus leaving no space for semantic ambiguity. At the same time, and for the same reason, there is a specific sociality to code to the extent that lines of code also encapsulate relationships of collaboration, training, and skill transfer. At Yandex, young recruits are encouraged to immerse themselves in the source code of the company and to spot errors or typos for debugging. This way they learn the conventions of the community, all of which are inscribed in the codebase. Face-to-face interactions and oral communication are limited, as developers work from different office buildings and spend most of their time facing their computer screens, writing code or conversing through chat. Yandex has a tradition of writing code without including comments in natural language: the code should be able to “speak for itself” by being accurate, simple, and “clean.” The very first thing every new employee has to learn is how to make code readable and to improve its utility for human readers. As in other programming communities, there is a difference in style between the “mathematicians” who prefer high-level languages such as Python and the “engineers” who favor low-level languages like C++. But projects at Yandex often mix the two approaches, while the corpus they create remains open to criticism and correction. All employees have access to the full codebase of the company and are free to comment on ongoing projects, upholding long-held principles of communal help that hark back to an idealized Soviet past.

Smart cities and technoparks

A key concern of policymakers is to create conditions under which the IT industry can flourish. Interventions to promote public-private partnerships and foster cooperation between institutions and actors occur at different scales, from macro to micro: special economic zones, regional corridors, smart cities, creative hubs, technoparks, startup incubators, rentable workspace, and so on. Russia can build upon a model of science promotion that has concentrated resources in isolated science cities and non-teaching research institutions such as the Academy of Sciences. It has been successful at generating scientific breakthroughs and achieving technological milestones in fields such as space exploration or the nuclear arms race. However, it has failed consistently in translating scientific discovery into technological innovation and market success. Commercialization was never a priority in the planned economy. In the IT sector, where innovation was increasingly driven by the market, the Soviet Union soon lost its lead in basic science and cybernetics and was reduced to licensing or copying Western technologies. Emerging from the ruins of the Soviet Union, the Russian state had its own particular vision of IT development. It aimed not simply at imitating the West, but at keeping innovation within state control through authoritarian policy decisions and administrative guidance. But instead of supporting existing science cities and research institutions, the state decided to build a new technological apparatus separate from the Soviet one and inspired by the Silicon Valley model. As a result, Russia got the worst of both worlds: increased competition and the profit motive led many IT professionals to leave the country in search of more remunerative opportunities, while domestically industrial policy gestured toward Silicon Valley but continued to follow the template of the old Soviet science apparatus. Created with great fanfare by then-President Dmitry Medvedev, the Skolkovo “Innovative City” is almost impossible to find on a map and very difficult to reach from Moscow. At the time of the book’s writing, it was criticized for “inefficiency, corruption, high rents, a complicated architectural plan, and a failing program for the support of startup companies.” Technoparks have been established in many other Russian cities to host both IT startups and larger technology companies. But local authorities are competing against each other through incentive and subsidy programs, while thousands of IT specialists have left the country and are likely never to return. Meanwhile, grassroots initiatives and homegrown developments were annihilated by the state’s attempt to regain control over peripheral regions. In the Russian Far East, a thriving ecosystem built around the online trading of used Japanese cars was suppressed by one stroke of a pen when the Russian state decided to impose a hefty levy on imported cars more than five years old. Other experiments such as Kazan’s self-branding as “the capital of the Russian IT industry” have met with more support from the centralizing state, whose priorities are aligned with the interests of local politicians in Tatarstan. However, at present the city plan remains more a layout than a fully functional smart city, and the reader cannot escape the feeling of being led through a Potemkin village by an overly enthusiastic research guide. It is easy to adopt the jargon of IT success and talk the talk of startup promotion. To walk the walk is another matter.

Russia’s Soviet heritage continues to linger in the present. But the Western capitalist model exemplified by Silicon Valley doesn’t represent the sole alternative. Not all Western countries share the same approach to running IT businesses. Elements of the socialist model, such as an orientation towards social justice, have influenced policies and mindsets in Scandinavia, where Russian expatriates appreciate the communalist ethos and the family-friendly environment. Other Russian migrants who have relocated to Boston or to Israel place high value on a corporate capitalist model of large organizations which are both risk-averse and profit-oriented. As the last article in the book concludes, “the entrepreneurial capitalism of Silicon Valley is not the only game in town.” There are circumstances when a “socialist” technological model or a “corporate” capitalist model is more applicable than the purely “entrepreneurial” model of IT startups and venture capital. From a Russian perspective, it makes sense to cultivate the tradition of high technical skills and complex problem-solving that constitutes Russia’s Soviet heritage. Business models that originate in the academic community are quite distinct from the capitalist motive of profit generation. Even in the West, open source programming and the free software movement have led to sustainable ventures and now undergird a vast portion of today’s internet. Moreover, the lack of entrepreneurial spirit among Russian IT specialists may be due to institutional factors: the lax attitude toward intellectual property, the absence of trust among young professionals, the relative isolation of Russia from global trade patterns, the absence of venture capital and related services to scale up enterprising businesses, the shadow of the criminal economy, etc. According to the authors, the brain drain narrative also needs to be complicated. Experiences of work migration by IT professionals from India or China have demonstrated that the “brain drain” is not an unfixable curse and can instead be viewed as “brain circulation,” with people looking for better conditions regardless of the country. Here again, the profit motive is not the only driver of individual decisions. Student and young researcher mobility is increasingly part of the academic curriculum, and the choice of destination is often motivated by existing collaborative networks or diasporic connexions. Scholars get a first taste of academic life abroad by spending a few months as a postdoctoral student or a guest lecturer before considering more long-term migration options. The same process of migrating step-by-step can also be found in a corporate environment where the decision to relocate is preceded by offshoring contracts and temporary missions. The story of Russian Jewish IT practitioners migrating to Boston during the Soviet period dispels the myth of the “tech maverick” and shows that migrants often have to retrain and upgrade their skill sets before they can find employment in US companies. The concept of brain drain assumes a kind of inherent and fixed value to the “brains” that leave their homeland and settle abroad. In practice, however, migration often leads to occupational downgrading, deprofessionalization, and de-skilling, as highly educated graduates lacking connexions and job-search skills become employed in low-skilled work or, at best, “upper-middle tech” in big US corporations. The failure to produce technological entrepreneurs among Russian immigrants should not be read as a result of their inability to operate in a capitalist economy or as a lack of entrepreneurial skills. Considering the limited options offered to migrants in a new environment, settling for a mid-level position in a large corporation instead of starting a new high-risk venture seems like a reasonable option.

The shadow of cyber criminality

In addition to the three models identified by the authors (socialist, entrepreneurial, or corporate), there is a fourth model that they don’t consider in their essays: the criminal one. Much late-Soviet entrepreneurial activity emerged as an antidote to the country’s collapsing economy, and the idea of “dishonest speculation” was seen as the predominant form of engaging in business activities. Only a fine line separated semi-legal market practices from criminal activities, and many young professionals equipped with IT skills were ready to cross it. The same skills that made fizmat school graduates valuable on the IT job market could also be turned toward quick gains in the shadow economy. During Russia’s market transition, the grey zone between legitimate, semi-legal, and illegal activity led to surprising developments, such as a publicly organized conference of avowed criminals that took place at Hotel Odessa in May 2002. The First Worldwide Carders Conference was convened by the administrators of CarderPlanet, a website on the dark web that specialized in mediating between vendors and purchasers of stolen credit card data. In the early age of e-commerce, when American banks and card issuers lagged behind in the chip-and-PIN technology which their European counterparts had developed, “carding” or credit card fraud became a very lucrative activity. Russian fizmat kids with access to a computer and an Internet connexion turned into early-day hackers and cybercriminals. CarderPlanet became the breeding ground of a whole generation who turned to cybercrime for lack of better opportunities in the context of a crumbling economy and a disintegrating state. Later on, these hackers turned to ransomware as the preferred mode of attack and to bitcoin as the privileged means of payment. Russian cybercriminality cannot be understood without appreciating its relationship to Russian national security interests. Early on, the FSB, Russia’s secret service, made it clear that any criminal operation against domestic state interests was off-limits and would be met with strong retaliation. Later on, criminal gangs were mobilized into cyberattacks against newly independent states such as Estonia or Georgia. Members of cyber gangs were also recruited into notorious state-backed hacking teams such as APT28 or Unit 26165. Cybercriminals hide behind anonymity services, encrypted communications, middlemen, puppet accounts, and pseudonyms. This makes it challenging for law enforcement agencies, let alone social scientists, to track them or describe their practices. A few facts highlighted by From Russia with Code might however be relevant here. Like conventional Russian software developers, Russian cybercriminals and hackers are likely to value technical prowess and coding virtuosity above all else. For them, code is a political instrument that has the power to challenge geopolitical power relations and capitalist business interests. Code also serves to create groups and communal identities of like-minded professionals, like the software-writing teams at Yandex. Studying their coding style and particular signature may help intelligence agencies to attribute cyberattacks to known actors in Russia, thereby responding to the challenge of attribution in cyber warfare. Like the professionals described in the book, Russian cybercriminals’ relation to the motherland is likely to be transactional. They are also geographically mobile, and need to venture abroad to close some illicit transactions, which gives Western law-enforcement agencies an opportunity to locate them and put them behind bars. Most participants in the 2002 CarderPlanet Conference have been identified, tracked down, arrested, and convicted.

How to witness a drone strike? Who—or what—bears witness in the operations of targeted killings where the success of a mission appears as a few pixels on a screen? Can there be justice if there is no witness? How can we bring the other-than-human to testify as a subject granted with agency and knowledge? What happens to human responsibility when machines have taken control? Can nonhuman witnessing register forms of violence that are otherwise rendered invisible, such as algorithmic enclosure or anthropogenic climate change? These questions lead Michael Richardson to emphasize the role of the nonhuman in witnessing, and to highlight the relevance of this expanded conception of witnessing in the struggle for more just worlds. The “end of the world” he refers to in the book’s title has several meanings. The catastrophic crises in which we find ourselves—remote wars, technological hubris, and environmental devastation—are of a world-ending importance. Human witnessing is no longer up to the task for making sense, assigning responsibility, and seeking justice in the face of such challenges. As Richardson claims, “only through an embrace of nonhuman witnessing can we humans, if indeed we are still or ever were humans, reckon with the world-destroying crises of war, data, and ecology that now envelop us.” The end of the world is also a location: Michael Richardson writes from a perch at UNSW Sydney, where he co-directs the Media Futures Hub and Autonomous Media Lab. He opens his book by paying tribute to “the unceded sovereignty of the Bidjigal and Gadigal people of the Eora Nation” over the land that is now Sydney, and he draws inspiration from First Nations cosmogonies that grant rights and agency to nonhuman actors such as animals, plants, rocks, and rivers. “World-ending crises are all too familiar to First Nation people” who also teach us that humans and nonhumans can inhabit many different worlds and ecologies. 
The world that is ending before our eyes is a world where Man, as opposed to nonhumans, was “the unexamined subject of witnessing.” In its demise, we see the emergence of “a world of many worlds” composed of humans, nonhumans, and assemblages thereof.

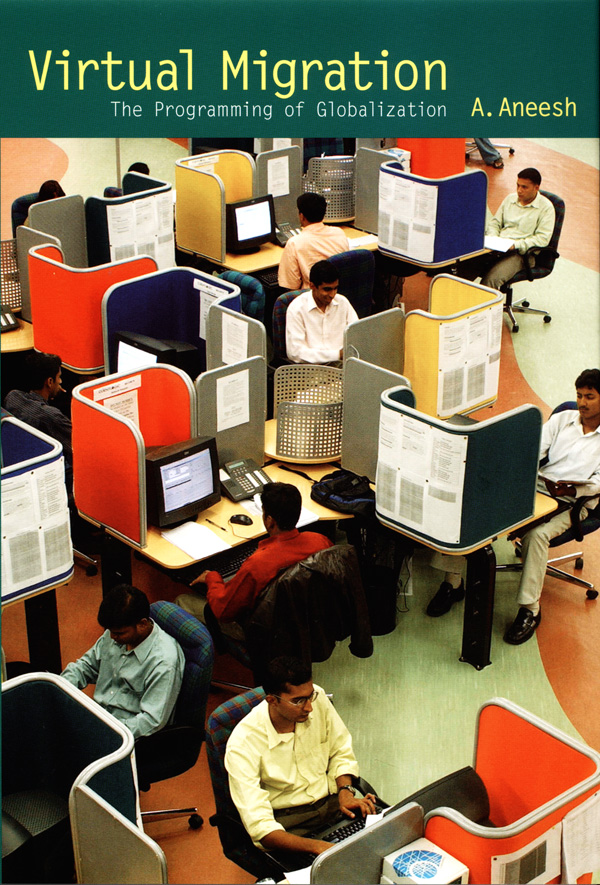

How to witness a drone strike? Who—or what—bears witness in the operations of targeted killings where the success of a mission appears as a few pixels on a screen? Can there be justice if there is no witness? How can we bring the other-than-human to testify as a subject granted with agency and knowledge? What happens to human responsibility when machines have taken control? Can nonhuman witnessing register forms of violence that are otherwise rendered invisible, such as algorithmic enclosure or anthropogenic climate change? These questions lead Michael Richardson to emphasize the role of the nonhuman in witnessing, and to highlight the relevance of this expanded conception of witnessing in the struggle for more just worlds. The “end of the world” he refers to in the book’s title has several meanings. The catastrophic crises in which we find ourselves—remote wars, technological hubris, and environmental devastation—are of a world-ending importance. Human witnessing is no longer up to the task for making sense, assigning responsibility, and seeking justice in the face of such challenges. As Richardson claims, “only through an embrace of nonhuman witnessing can we humans, if indeed we are still or ever were humans, reckon with the world-destroying crises of war, data, and ecology that now envelop us.” The end of the world is also a location: Michael Richardson writes from a perch at UNSW Sydney, where he co-directs the Media Futures Hub and Autonomous Media Lab. He opens his book by paying tribute to “the unceded sovereignty of the Bidjigal and Gadigal people of the Eora Nation” over the land that is now Sydney, and he draws inspiration from First Nations cosmogonies that grant rights and agency to nonhuman actors such as animals, plants, rocks, and rivers. “World-ending crises are all too familiar to First Nation people” who also teach us that humans and nonhumans can inhabit many different worlds and ecologies. 
The world that is ending before our eyes is a world where Man, as opposed to nonhumans, was “the unexamined subject of witnessing.” In its demise, we see the emergence of “a world of many worlds” composed of humans, nonhumans, and assemblages thereof. A. Aneesh first coined the word algocracy, or algocratic governance, in his book Virtual Migration, published by Duke University Press in 2006. He later refined the term in his book Neutral Accent, an ethnographic study of international call centers in India (which I reviewed

A. Aneesh first coined the word algocracy, or algocratic governance, in his book Virtual Migration, published by Duke University Press in 2006. He later refined the term in his book Neutral Accent, an ethnographic study of international call centers in India (which I reviewed  Lesbian feminists invented the Internet, and they did it without the help of a computer. This is the surprising finding that comes out of the book Information Activism: A Queer History of Lesbian Media Technologies, published by Duke University Press in 2020. As the author Cait McKinney immediately makes it clear, the Internet that lesbians built was not composed of URL, HTML, and IP servers: it was an assemblage of print newsletters, paper index cards, telephone hotlines, paper-based community archives, and early digital technologies such as electronic mailing lists and computer databases. What made these early media technologies “lesbian” is that they formed the information infrastructure of a social movement that Cait McKinney describes as “information activism” and that was oriented toward the needs and aspirations of lesbian women in North America during the 1980s and 1990s. And what makes Cait McKinney’s book a “queer history” is that she brings feminism and queer studies to bear on a media history of US lesbian-feminist information activism based on archival research, oral interviews, and participant observation through volunteering in the Lesbian Herstory Archives in New York. Information activism took many forms: sorting index cards, putting mailing labels on newsletters, answering the telephone every time it rings, converting old archives into digital format… All these activities may not sound glamorous, but they were part of the everyday politics of “being lesbian” and “doing feminism.”

At the turn of the twenty-first century, China became identified as the world’s factory and India as the world’s call center. Like China, India attracted the attention of journalists and pundits who heralded a new age of globalization and documented the rise of the world’s two emerging giants. Foremost among them, Thomas Friedman wrote several New York Times columns about call centers in Bangalore and devoted nearly half a book, The World Is Flat, to reviewing personal conversations he had with Indian entrepreneurs working in the IT sector. He argued that outsourcing service jobs to Bangalore was, in the end, good for America: what goes around comes around in the form of American machine exports, service contracts, software licenses, and more US jobs. He further expanded his optimistic view to conjecture that two countries at either end of a call center line will never fight a war against each other. An intellectual tradition going back to Montesquieu posits that “sweet commerce” tends to civilize people, making them less likely to resort to violent or irrational behavior. According to this view, economic relations between states act as a powerful deterrent to military conflict. As during the Cold War, telecom lines can be used as a tool of conflict prevention, with the difference that the “hot line,” which used to connect the Kremlin to the White House, has been replaced by the “help line,” which connects everyone in America to a call center in the developing world. The benefits of openness therefore extend to peace as well as prosperity. In a flat world, nations that open themselves up to the world prosper, while those that close their borders and turn inward fall behind.

Video games are now part of popular culture. Like books or movies, they can be studied as cultural productions, and university departments offer courses that critically engage with them. Scholars who specialize in this field of study take various perspectives: they can chart the history of video game production and consumption; they can focus on game design or aesthetic value; or they can analyze narrative content and plot. There is no limit to how video games can be engaged: some thinkers even take them as fertile ground for philosophy and theory building. Within the past few years, a handful of books have been published on video game theory. Colin Milburn’s Respawn can be added to that budding strand of literature. It is a work of applied theory: the author doesn’t engage with longstanding philosophical problems or abstract reasoning, but draws on examples from a wide range of games, from Portal and Final Fantasy VII to Super Mario Sunshine and Shadow of the Colossus, to illustrate how they impact the lives of gamers and non-gamers alike. In particular, he considers the value of video games for shaping protest and political action. Video games, with the devotion that serious gamers bring to the task, introduce the possibility of living otherwise, of hacking the system, of gaming the game. Gamers and hackers develop alternative forms of participatory culture along with new tactics of critique and intervention. Hacktivist groups such as Anonymous use video game language and aesthetics to disrupt the operations of the security state and launch attacks on the neoliberal order. Pirate parties have won seats in European legislatures and advocate a brand of techno-progressivism, digital liberties, and participatory democracy largely inspired by video games. Exploring the culture of video games can therefore offer a glimpse into the functioning of our modern democracies in a computerized world.

Two Bits is a failed anthropology project. This does not make it a bad book: it is well-written and informative, and I learned a lot about Free Software and Open Source by reading it. But it does not meet the academic standards that one expects from a book published in an anthropology series. These standards, as I see them, pertain to the position of the anthropologist; the importance of fieldwork; the role of theory; the interpretation of facts; and the style of ethnographic writing. Let me elaborate on these five points.
